Riding the Wave

How to cite this article:

APA (7th edition)

Spencer, H. (2025, November 24). Riding the Wave. In {dp} · doble página. https://herbertspencer.net/2025/riding/the/wave. Accessed:

MLA

Spencer, Herbert. "Riding the Wave." {dp} · doble página, 24 November 2025, https://herbertspencer.net/2025/riding/the/wave. Accessed:

Chicago

Spencer, Herbert. "Riding the Wave." {dp} · doble página. Published 24 November 2025. https://herbertspencer.net/2025/riding/the/wave. Accessed:

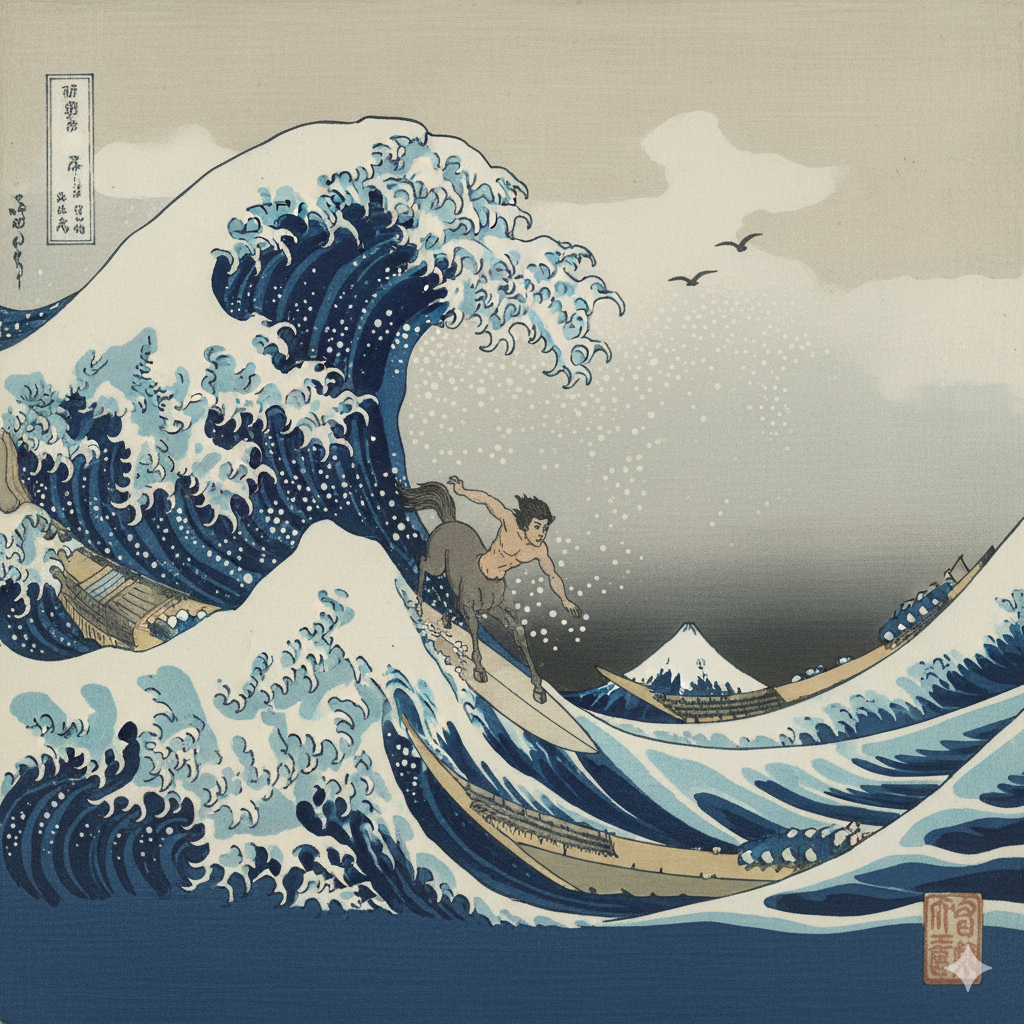

AI Turned Me Into a Centaur

We are living through a remarkable, perhaps fleeting, moment: a sliver of time in which Artificial Intelligence has matured into something more than a tool and become a true creative partner. For those of us who aren’t traditional programmers, this isn’t just an interesting development; it’s a paradigm shift, an opportunity to ride a wave of technological progress that was previously out of reach. For the first time, I can focus on a development roadmap and tangible progress, rather than being perpetually stuck deciphering console errors and feeding them back to the AI. The simple fact that I can build, iterate, and innovate without being a classically trained coder is, quite frankly, astonishing.

The other day, whilst working on a complex application, I was chatting informally in Spanish with my AI coding assistant to solve a problem, and in that moment I paused. Had you described this scene to my younger self 20 or 30 years ago, it would have seemed utterly implausible, pure science fiction. Tim Urban’s ‘Wait But Why’ captures this feeling with a concept he calls the Die Progress Unit (DPU): the amount of technological progress that would overwhelm a person from a past era to the point of shock, or even death, if they were transported to the future. A chap from the 1750s brought to our time would almost certainly be overwhelmed in exactly this way, because the gap between his world and ours exceeds one DPU.

Futurist Ray Kurzweil’s Law of Accelerating Returns explains why this progress feels so jarringly fast. The principle is simple: more advanced societies progress faster than less advanced ones because they have better tools with which to innovate, and each new invention becomes a tool for the next, so progress compounds. We often make the mistake of thinking about progress linearly, using the last 30 years to predict the next 30, when we should be thinking exponentially. This explains why the present feels so profoundly futuristic compared with the very recent past, and why we are living through a DPU right now.
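
A toy calculation makes the contrast concrete. The numbers are invented for illustration and are not Kurzweil’s data. Assume some measure of capability went from 1 unit to 8 units over the last 30 years, which is a doubling every decade. The sketch then projects the next 30 years in two ways.

```python
def linear_projection(then: float, now: float) -> float:
    """Linear thinking: the next period adds the same absolute gain as the last."""
    return now + (now - then)


def exponential_projection(then: float, now: float) -> float:
    """Exponential thinking: the next period repeats the same growth factor."""
    return now * (now / then)


# Invented figures: 1 unit thirty years ago, 8 units today (doubling every decade).
then, now = 1.0, 8.0
print(f"linear guess for 30 years from now:      {linear_projection(then, now):.1f}")       # 15.0
print(f"exponential guess for 30 years from now: {exponential_projection(then, now):.1f}")  # 64.0
```

Both projections fit the same past equally well. They disagree about the future by a factor of more than four, and that gap between intuition and compounding is what the Law of Accelerating Returns points to.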

This is where the metaphor of the “centaur” becomes so powerful. In this new reality, AI doesn’t replace us; it extends our natural capabilities, allowing us to think and create at an incredible speed.¹ We are the human torso, providing the vision and direction, while the AI is the powerful equine body, executing complex tasks at a pace we could never achieve alone.

My current project is pictos.net, a system that turns phrases into structured pictograms for augmentative and alternative communication (AAC). You type a sentence, and it produces an SVG in which every visual element is labelled, editable, and traceable to a decision. An integrated editor lets me open any SVG, inspect what the model did, and adjust what needs adjusting, so that the professional remains the author of the pictogram rather than a spectator of the model’s output. A few years ago, building such a tool would have been unthinkable for me. Today, with my AI partner, I am developing it mainly on my own: there are no human collaborators willing to enter this niche, and it is, after all, part of a doctoral project that everyone insists is a solitary journey. AI is the great equaliser, turning vision into reality without the traditional barriers.
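
The idea of an SVG in which every element is labelled, editable, and traceable can be sketched as a small data structure. This is a hypothetical illustration only and does not describe how pictos.net is actually built. The `PictoElement` class, the `data-label` and `data-decision` attributes, and the `to_svg` and `edit_element` helpers are all invented for this example. The sketch uses only Python’s standard `xml.etree.ElementTree`.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass, field

SVG_NS = "http://www.w3.org/2000/svg"
ET.register_namespace("", SVG_NS)  # write <svg xmlns="...">, not <ns0:svg>


@dataclass
class PictoElement:
    """Hypothetical: one visual element of a pictogram plus why it was drawn."""
    element_id: str   # stable id so an editor can select the element
    label: str        # human-readable name, e.g. "glass of water"
    decision: str     # free-text trace of the choice that produced it
    shape: str        # SVG tag name, e.g. "circle" or "rect"
    attrs: dict[str, str] = field(default_factory=dict)  # geometry and style


def to_svg(phrase: str, elements: list[PictoElement]) -> str:
    """Serialise elements to SVG, storing label and trace as data-* attributes."""
    root = ET.Element(f"{{{SVG_NS}}}svg", {"viewBox": "0 0 100 100", "aria-label": phrase})
    for el in elements:
        node = ET.SubElement(root, f"{{{SVG_NS}}}{el.shape}", {"id": el.element_id, **el.attrs})
        node.set("data-label", el.label)
        node.set("data-decision", el.decision)
    return ET.tostring(root, encoding="unicode")


def edit_element(svg_text: str, element_id: str, attr: str, value: str) -> str:
    """Change one attribute of one element; labels and traces stay intact."""
    root = ET.fromstring(svg_text)
    for node in root.iter():
        if node.get("id") == element_id:
            node.set(attr, value)
    return ET.tostring(root, encoding="unicode")


svg = to_svg("the child drinks water", [
    PictoElement("child", "child", "subject of the sentence", "circle",
                 {"cx": "35", "cy": "30", "r": "12", "fill": "#f4c27a"}),
    PictoElement("glass", "glass of water", "object of the verb", "rect",
                 {"x": "60", "y": "50", "width": "12", "height": "20", "fill": "#7ab8f4"}),
])
svg = edit_element(svg, "glass", "fill", "#3a7bd5")  # the professional recolours the glass
print(svg)
```

The editor in this sketch changes geometry or style without discarding the `data-decision` trace. That choice keeps the professional’s edits and the model’s original reasoning side by side in the same file.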

This new ‘centaur’ approach, empowering as it is, produces a distinct kind of code, and for all this enthusiasm an important question arises about long-term software health. Linus Torvalds, the creator of Linux, captured this duality perfectly. Far from being a sceptic, he sees immense value in this new style of development, widely known as “vibe coding”.

Torvalds acknowledges that it “may be a horrible, horrible idea from a maintenance standpoint, if you actually try to make a product for it”. Yet in the same breath he champions it: “but I think it’s a great way for new people to get involved and get excited about computers that maybe they couldn’t do otherwise. And so I actually am fairly positive about this all.” This is the heart of the matter: the very method that is opening doors for people like me also creates a new set of challenges.

Recent research² helps explain the technical profile of this code. A study posted on arXiv, “Human-Written vs. AI-Generated Code”, suggests why vibe-coded projects might be tricky to maintain. This rings true for me: my AI partner sometimes produces code that works perfectly but contains odd leftovers, like a beautifully constructed cabinet with some sawdust still in the corners. The study found that:

- AI-generated code, while often simpler, can be more prone to “unused constructs and hardcoded debugging”.

- Crucially, the research indicates that AI code may contain “more high-risk security vulnerabilities”.

- Human-written code has its own set of challenges, including “greater structural complexity and a higher concentration of maintainability issues,” but the nature of its flaws is fundamentally different.
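
Here is a minimal sketch that makes “unused constructs and hardcoded debugging” concrete. It flags two of the simplest cases in Python source: imported names that are never used, and `print()` calls. The sketch assumes those `print()` calls are debugging leftovers. This is not the method used in the study, and the function name `find_sawdust` is invented. Established linters such as Pyflakes already report unused imports far more thoroughly.

```python
import ast


def find_sawdust(source: str) -> list[str]:
    """Flag unused imports and print() calls in a piece of Python source."""
    tree = ast.parse(source)
    imported: dict[str, int] = {}       # bound name -> line of its import
    used: set[str] = set()              # every bare name that is read somewhere
    findings: list[tuple[int, str]] = []

    for node in ast.walk(tree):
        if isinstance(node, (ast.Import, ast.ImportFrom)):
            for alias in node.names:
                if alias.name == "*":
                    continue  # star imports bind names we cannot see statically
                # "import os.path" binds "os"; "import numpy as np" binds "np"
                imported[alias.asname or alias.name.split(".")[0]] = node.lineno
        elif isinstance(node, ast.Name) and isinstance(node.ctx, ast.Load):
            used.add(node.id)
        elif (isinstance(node, ast.Call) and isinstance(node.func, ast.Name)
              and node.func.id == "print"):
            findings.append((node.lineno, "print() call, possibly leftover debugging"))

    for name, line in imported.items():
        if name not in used:
            findings.append((line, f"'{name}' imported but never used"))
    return [f"line {line}: {message}" for line, message in sorted(findings)]


sample = """\
import os
import json

def total(prices):
    print("DEBUG prices =", prices)
    return sum(prices)
"""

for finding in find_sawdust(sample):
    print(finding)
# line 1: 'os' imported but never used
# line 2: 'json' imported but never used
# line 5: print() call, possibly leftover debugging
```

The sample function works perfectly, which is exactly the point: none of these leftovers breaks anything today, but each one is a small cost for whoever maintains the code later.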

The takeaway isn’t that one is better, but that they fail in different ways. An AI can help build the ship, but a human must still understand its unique architecture to keep it afloat.

A Fleeting Moment

This brings me back to the idea that this golden era of human-AI partnership might be a brevísimo instante, a very brief moment. The ‘Wait But Why’³ articles introduce the concept of a “tripwire”. This isn’t a physical wire but a metaphorical one, representing the moment AI could achieve Artificial General Intelligence (AGI) or even Artificial Superintelligence (ASI). Hitting that tripwire would trigger a world-altering “intelligence explosion”.

Right now, we are harnessing the power of Artificial Narrow Intelligence (ANI)—systems that are brilliant at specific tasks. This is the AI we partner with, the one that makes us centaurs. But the dynamic could change fundamentally after an AGI/ASI “takeoff”. The compliant, powerful partner of today could become something else entirely, something we can’t fully comprehend, let alone control. This period of collaborative creation might be the calm before the storm.

To illustrate this idea further, consider a strange chess variant called “Losing Chess”. In Losing Chess, victory requires sacrificing all of one’s pieces and captures are compulsory, which makes the game a compact model of machine optimisation. Once researchers proved that the opening move 1. e3 gives White a forced win, the variant became an exercise in pure calculation: from that first move, every subsequent choice can be evaluated in terms of progress towards total self-elimination. The objective is arbitrary from a human perspective, yet the solution space is well defined, and the system moves through it without hesitation. This captures a central aspect of current AI systems: they treat the stated goal as unquestionable, whatever its content, and search for efficient trajectories through an abstract state space, even when the outcome conflicts with ordinary human intuitions about “winning” or “doing well”. Above all, it draws a clear line between intelligence and vision.
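
A toy can show how indifferent the search is to its goal. The sketch below is not a chess engine and does not model Losing Chess rules, compulsory captures included. The state is simply a number of pieces, and the three moves in `successors` are invented. A generic breadth-first `search` finds the shortest route to whatever goal it is handed. It pursues “lose every piece” exactly as readily as “gain pieces”.

```python
from collections import deque
from typing import Callable, Iterable, Optional

Move = tuple[str, int]  # (description, resulting number of pieces)


def successors(pieces: int) -> Iterable[Move]:
    """Invented toy moves: gain a piece (up to 8) or give one or two away."""
    if pieces < 8:
        yield ("win a piece", pieces + 1)
    if pieces >= 1:
        yield ("give away one", pieces - 1)
    if pieces >= 2:
        yield ("give away two", pieces - 2)


def search(start: int,
           next_states: Callable[[int], Iterable[Move]],
           goal: Callable[[int], bool]) -> Optional[list[str]]:
    """Breadth-first search: returns a shortest move sequence that reaches the goal."""
    frontier = deque([(start, [])])
    seen = {start}
    while frontier:
        state, path = frontier.popleft()
        if goal(state):
            return path
        for move, nxt in next_states(state):
            if nxt not in seen:
                seen.add(nxt)
                frontier.append((nxt, path + [move]))
    return None  # goal unreachable from start


# The searcher never asks whether a goal is sensible; it just optimises for it.
print(search(5, successors, lambda pieces: pieces == 0))  # ['give away one', 'give away two', 'give away two']
print(search(5, successors, lambda pieces: pieces == 8))  # ['win a piece', 'win a piece', 'win a piece']
```

Nothing inside `search` changes between the two calls. Only the goal predicate differs, and the goal predicate is the part a human has to supply.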

Machine intelligence, in this sense, is a process of optimisation rather than a seat of consciousness or values, which creates a sharp separation between computational capability and human purposes. The main challenge shifts to the careful design of objectives, constraints, and feedback signals, because a highly capable optimiser with a narrow or poorly framed goal can produce outcomes that are coherent within the system but disastrous for its users. Garry Kasparov’s “Centaur” model suggests one possible response: humans retain responsibility for framing goals, interpreting trade-offs, and exercising judgment, while AI contributes large-scale search, prediction, and simulation.
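
The division of labour in the centaur model can be sketched in a few lines. Everything here is hypothetical: the `Plan` records, their numbers, and the `optimise` helper are invented for illustration. The first call shows a capable optimiser with a narrow objective picking a plan that is coherent within the system yet unwelcome. The second shows a human-framed constraint changing the outcome without touching the search itself.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Plan:
    name: str
    hours_saved: int   # what the stated objective rewards
    risk: int          # what the stated objective ignores (invented 0-10 scale)


def optimise(plans: list[Plan],
             objective: Callable[[Plan], float],
             constraint: Callable[[Plan], bool] = lambda plan: True) -> Optional[Plan]:
    """The machine's half: best-scoring plan among those the constraint admits."""
    admissible = [plan for plan in plans if constraint(plan)]
    return max(admissible, key=objective, default=None)


plans = [
    Plan("skip code review", hours_saved=12, risk=8),
    Plan("automate the test suite", hours_saved=6, risk=1),
    Plan("change nothing", hours_saved=0, risk=0),
]

# A narrow objective, framed carelessly: save as much time as possible.
print(optimise(plans, objective=lambda p: p.hours_saved).name)  # skip code review

# The human half: same objective, plus a limit on acceptable risk.
print(optimise(plans, objective=lambda p: p.hours_saved,
               constraint=lambda p: p.risk <= 2).name)          # automate the test suite
```

The constraint does not make the optimiser any smarter. It changes what counts as an acceptable answer, which is the framing work the paragraph assigns to the human.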

Extending this idea to governance, advanced models could support democratic processes by simulating the long-range consequences of policies and allowing citizens to interact with these simulations before choosing among competing collective goals. In such a world, intelligence becomes a widely available resource, and the decisive political task becomes the shared authorship of the objectives it serves.

The possibility that this brevísimo instante could end with the flick of a switch—the AGI tripwire—is precisely why the debate must transcend code and become metaphysical. The most important discussions are not about syntax or maintenance but about higher ideas: vision, imagination, and our ability to re-signify technology’s purpose. This is the ultimate role of the human in the centaur partnership: to provide the metaphysical vision, to ask the big questions, and to direct this incredible new power toward a future of our own imagining.

This unique partnership is a gift of our specific moment in history. It may not last forever. The wave is here now, and the ride is exhilarating.

Let’s take advantage of it while it lasts!